Think Twice Before Using Meta AI for Sensitive Info

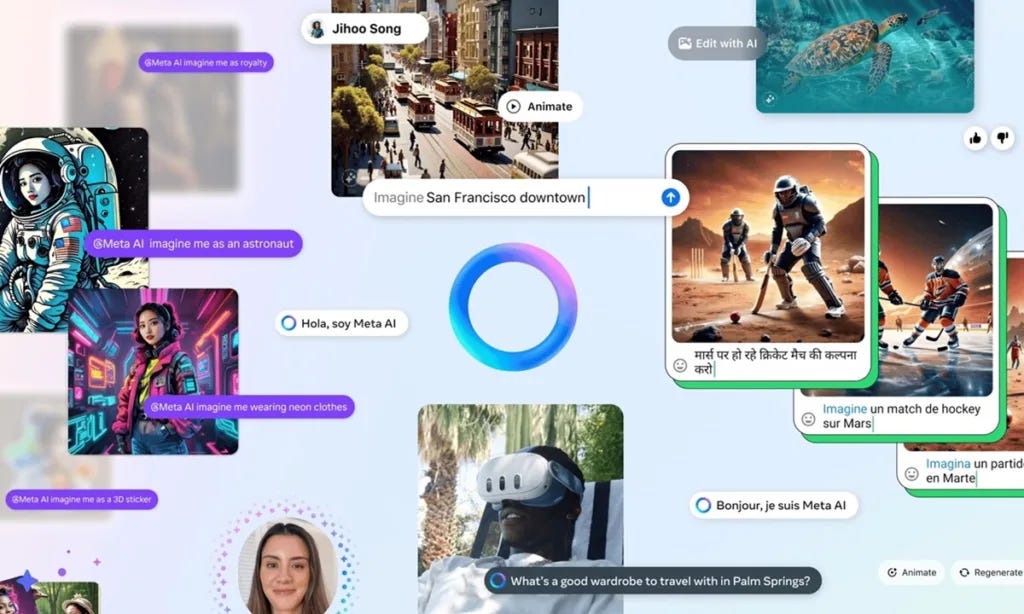

Meta AI's handling of sensitive data raises concerns. Learn why you should think twice before sharing private or critical information with Meta AI tools.

Meta has done it again. Only a few days after we learned of the technique Meta had been using to violate the privacy of Android users, a new controversy has arisen, this time over the Meta AI assistant integrated into Instagram. Although it is not a glitch, the situation has raised concerns about what many consider a serious user experience mistake: private conversations are being posted unintentionally in a public space, with no clear warning and no proper mechanism to prevent it.

It all started with the rollout of Meta AI in the Instagram application, where users can interact with the assistant to answer questions, ask for suggestions, or generate content. The problem arises when, after a query has been answered, the platform offers the option of “sharing.” This seemingly innocuous button leads to a preview that, once accepted, automatically turns the exchange into a post visible to other users, with no clear warning about the implications of that action.

The shared content appears in the Discover section, a feed where Meta showcases highlighted interactions with its AI. Although this feature could be understood as a way to promote the assistant's visibility, in practice it has worked as a trap for many users who were not aware that they were making their questions or answers public. Even worse, the post is published without any follow-up notification or an easy control to remove the content.

The consequences were not long in coming. Several users have found their own conversations in the Discover feed, some covering extremely sensitive topics: medical or legal issues, personal data, and intimate questions. Exchanges that should have remained private have ended up publicly exposed, fueling concerns that Meta hasn’t learned enough from its previous mistakes in this area.

While technically no data breach has occurred, experts agree that the design of this feature is misleading, to say the least. There is no clear warning that the conversation will become public, and the wording of the “share” button does not accurately reflect the action it takes. As artificial intelligence becomes a regular interlocutor, this type of ambiguity can have serious consequences for users.

Cybersecurity specialists such as Rachel Tobac have pointed out how problematic this type of design decision is, and consider it a recurring pattern at Meta: interfaces that prioritize engagement or product visibility at the expense of clarity for the user. Organizations such as EPIC and Mozilla have demanded an urgent review of this behavior, even calling for the temporary suspension of the Discover feed until robust safeguards are in place.

For more, check out this article: https://technoluting.com/meta-ai-sensitive-data/